Nvidia Compiler and Inference API

Attention

Access to Qlip requires an API token from TheStage AI Platform and additional access, which can be requested by contacting frameworks@thestage.ai.

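Here we will cover the following topics:

- Overview
- API Reference
- PyTorch Model Compilation and Inference
- Troubleshooting and Limitations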

Overview

Main pipeline for compiling and running inference of PyTorch models with Qlip on Nvidia GPUs:

Compilation workflow

Step 1: Importing Required Modules and Model Preparation

- Obtain a PyTorch model.
- Initialize the compilation manager NvidiaCompileManager.

Step 2: Setting up the model for compilation

- Specify the builder configuration NvidiaBuilderConfig.
- Set up the submodules or the whole model for compilation with setup_modules() or setup_model().

Step 3: Capturing shape profiles

- Obtain the ShapeProfileManager context manager with shape_profile().
- Run examples with different input shapes to capture shape profiles.

Step 4: Tracing the Model and Compilation

- Compile the model/submodules with compile().

Step 5: Running Inference

- Specify the inference session configuration NvidiaSessionConfig.
- Set up the inference manager NvidiaInferenceManager, either from the workspace or from the compile manager with from_compilemanager().
- Set up the submodules or the whole model for inference with setup_model() or setup_modules().
- Run inference of the compiled model.

Why use Qlip compiler for Nvidia?

Significant acceleration of inference on NVIDIA GPUs.

Not JIT compilation. Compiled models can be saved to disk and reused with minimal cold start time.

Support for dynamic shapes and optimization for specific performance-critical inputs.

Allows mixing PyTorch code and multiple compiled models in a single pipeline with full memory reuse.

Natively supports compilation of models with quantized weights and activations produced by Quantization API and Quantization Algorithms.

Supports compilation of models by blocks, allowing optimization of specific parts of the model separately and reducing memory usage during compilation.

Supports compilation of models to float32, float16 and bfloat16 data types.

Supports compilation of mixed-precision models that mix w8a8, w4a16 and w16a16 quantization with float16 and bfloat16 data types.

API Reference

The Nvidia backend is based on TensorRT, CUDA libraries and custom TheStage AI kernels.

Note

The compiler supports TensorRT 10.6 and higher.

Base Classes

- Compiled module class for compilation.
- ShapeProfileManager: shape profile manager that controls shape collection.
- Compiled module for inference.

Nvidia-specific Classes

- NvidiaCompileManager: Nvidia manager for compilation.
- NvidiaBuilderConfig: holds configuration options for the Nvidia builder.
- NvidiaInferenceManager: Nvidia inference manager.
- NvidiaSessionConfig: configuration for the Nvidia inference session.
- NvidiaMemoryManager: Nvidia memory manager.

CUDA Graph

- CudaGraphManager: manages the CUDA graphs for a model's method.

PyTorch Model Compilation and Inference

This section covers how to compile and run inference of PyTorch models using Qlip on Nvidia GPUs.

Basic Model Compilation

The basic compilation workflow for Nvidia:

- Initialize the compile manager with NvidiaCompileManager.
- Set up the model for compilation.
- Capture shape profiles by running example inputs.
- Compile the model.
- Run inference with the compiled model.

In this example, we have a ResNet-18 model and want to use it for image classification. We want to support inference on batch sizes from 1 to 64.

First, we obtain the model in the float16 data type and set up the model for compilation with NvidiaCompileManager.

```python
import qlip
import torch
import torchvision.models as models

from qlip.compiler.nvidia import NvidiaCompileManager
from qlip.inference.nvidia import NvidiaInferenceManager

# select fastest device by default
device = qlip.node.device
dtype = torch.float16

# creating models for compilation and comparison
# (weights= replaces the deprecated pretrained=True argument of torchvision)
weights = models.ResNet18_Weights.DEFAULT
model_orig = models.resnet18(weights=weights).to(device).to(dtype)
model_qlip = models.resnet18(weights=weights).to(device).to(dtype)
model_orig.eval()
model_qlip.eval()

# different inputs for tracing
input_1 = torch.randn(1, 3, 224, 224).to(device).to(dtype)
input_2 = torch.randn(64, 3, 224, 224).to(device).to(dtype)

# setup model for compilation
cmanager = NvidiaCompileManager(model_qlip, workspace="model_qlip")
model_qlip = cmanager.setup_model(dtype=dtype)
```

To compile a model with different input shapes, you can use a dynamic shapes strategy. For that, you need to trace the model with different input shapes. Using two inputs with batch sizes 1 and 64 and then compiling the model with a dynamic shape profile, you will be able to run inference on any input shape with batch size between 1 and 64.

```python
# trace different input shapes which we wish to cover
with cmanager.shape_profile(type="dynamic"):
    model_qlip(input_1)
    model_qlip(input_2)

# compile model
cmanager.compile()
```

Now we can run inference on any input shape with batch size between 1 and 64 and compare results with the original model.

```python
import time

input_3 = torch.randn(32, 3, 224, 224).to(device).to(dtype)

def benchmark(model, inputs, n_repeat=10):
    # wait for pending GPU work before starting the timer
    qlip.node.backend.synchronize()
    t = time.perf_counter()
    with torch.no_grad():
        for _ in range(n_repeat):
            output = model(inputs)
    # wait for all launched kernels to finish before stopping the timer
    qlip.node.backend.synchronize()
    return time.perf_counter() - t, output

t_qlip, output_qlip = benchmark(model_qlip, input_3)
t_orig, output_orig = benchmark(model_orig, input_3)

print("DIFF: ", (output_qlip - output_orig).abs().median())
print(f"Qlip model inference time: {t_qlip:.4f} seconds")
print(f"Original model inference time: {t_orig:.4f} seconds")
```

Output on an Nvidia L40s GPU (times are totals over the 10 iterations of benchmark()):

```
DIFF: tensor(0.0020, device='cuda:0', dtype=torch.float16)
Qlip model inference time: 0.0142 seconds
Original model inference time: 0.0760 seconds
```

To delete a compiled model and free memory, delete both the compiled module and the compile manager:

```python
del model_qlip
del cmanager
```

Serialization of Compiled Models

The compile manager saves the compiled model to the workspace directory. This is controlled by the save_compiled argument of compile(), which is True by default.

The Nvidia backend saves compiled engines in the encrypted .qlip format. An encryption key (qlip_key.bin) is saved in the workspace directory and is required to load the models at inference time.

Inference of Compiled Models

You can run the compiled model directly with the default session configuration.

Advanced inference options are available through the inference manager NvidiaInferenceManager. It can be initialized from the compile manager with from_compilemanager().

To load the compiled model from the workspace, you can use the same class NvidiaInferenceManager with one of the following methods:

- setup_model(): sets up the whole model from the workspace.
- setup_modules(): sets up individual modules from the workspace.
- auto_setup(): automatically sets up the model and/or submodules from the workspace.

```python
from qlip.inference.nvidia import NvidiaInferenceManager, NvidiaSessionConfig

# Session configuration with TensorRT-level CUDA graphs enabled
config = NvidiaSessionConfig(use_cuda_graph=True)

# Option 1: initialize the inference manager from an existing compile manager
imanager = NvidiaInferenceManager.from_compilemanager(cmanager, config=config)

# Option 2: load the compiled model from the workspace,
# where `model` is the original (uncompiled) PyTorch model
imanager = NvidiaInferenceManager(model, workspace="model_qlip")
model_qlip = imanager.auto_setup()
```

Multiple Data Types in Inputs

Models can accept inputs with different data types. For instance, you may want to use float32 for some inputs and float16 for the rest. This is useful when precision-sensitive operations should run in float32 and their results are then cast to float16 for the rest of the model. Qlip handles such cases automatically.

In practice, this situation occurs in the FLUX model, where we keep rotary embeddings in float32.

Example

A model with multiple inputs and different data types is defined as follows:

```python
import torch

class MultiInputModel(torch.nn.Module):
    def forward(self, scale, input_1, input_2):
        # compute in the dtype of input_1 (float32 below), then cast to the dtype of input_2
        input_1 = torch.sigmoid(input_1 * scale).to(input_2.dtype)
        return input_1 + input_2.unsqueeze(-1).unsqueeze(-1)
```

Model compilation and inference:

```python
import qlip
import torch
from qlip.compiler.nvidia import NvidiaCompileManager

device = qlip.node.device
dtype = torch.float16

model = MultiInputModel().to(device).to(dtype)
cmanager = NvidiaCompileManager(
    model,
    workspace="multiinput_model_qlip"
)

# Setup compiled model (tracing is not yet performed)
model_qlip = cmanager.setup_model(dtype=dtype)

# Prepare inputs with custom dtypes
input_1 = torch.randn(1, 16, 64, 64).to(device).to(torch.float32)
input_2 = torch.randn(1, 16).to(device).to(dtype)
scale = torch.tensor(0.001).to(device).to(torch.float32)

with cmanager.shape_profile("static"):
    model_qlip(scale, input_1, input_2)

# Compile the traced model
cmanager.compile()

# Run inference with compiled model
output = model_qlip(scale, input_1, input_2)
```

Working with Multiple Shapes

We already saw how to compile a model with dynamic shapes, but there are more ways to specify input shapes. Generally, there are two options:

- Dynamic shape profile: use the minimum and maximum shapes observed during tracing.
- Static shape profiles: use a list of shapes that were observed during tracing. This is useful when you have a fixed set of shapes that you want to support with maximum performance.

The compile manager provides a context manager, ShapeProfileManager, to keep track of input shapes. It can also skip the first n shapes during tracking.

Dynamic shape profile

A single with block of the ShapeProfileManager context manager with the dynamic type captures one dynamic shape profile.

```python
with cmanager.shape_profile(type="dynamic"):
    model(torch.randn(1, 3, 224, 224).to(device).to(dtype))
    model(torch.randn(4, 3, 224, 224).to(device).to(dtype))
    # ... add more shapes as desired
```

In this case, we captured a minimum batch size of 1 and a maximum batch size of 4. The compiled model will work with any input whose batch size is between 1 and 4.

The opt parameter controls which shape is used as the optimal shape for TensorRT optimization:

- "mode" (default): the most frequently seen shape is used as the optimal shape.
- "min": the minimum shape is used as the optimal shape.
- "max": the maximum shape is used as the optimal shape.

```python
with cmanager.shape_profile(type="dynamic", opt="min"):
    model_qlip(input_1)
    model_qlip(input_2)
```
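To make the min/opt/max rule concrete, here is a plain-Python sketch of how one dynamic profile could be derived from the shapes captured for a single input. It illustrates the behavior described above under our own assumptions and is not Qlip's implementation. build_dynamic_profile is a hypothetical name, all captured shapes are assumed to have the same rank, min and max are taken per dimension, and ties under "mode" go to the shape seen first.

```python
from collections import Counter

Shape = tuple[int, ...]


def build_dynamic_profile(shapes: list[Shape], opt: str = "mode") -> dict[str, Shape]:
    """Hypothetical sketch: derive (min, opt, max) for one input from traced shapes."""
    if not shapes:
        raise ValueError("no shapes captured")
    rank = len(shapes[0])
    if any(len(s) != rank for s in shapes):
        raise ValueError("all captured shapes must have the same rank")
    # per-dimension bounds over every traced forward call
    min_shape = tuple(min(s[d] for s in shapes) for d in range(rank))
    max_shape = tuple(max(s[d] for s in shapes) for d in range(rank))
    if opt == "mode":
        # most frequent full shape; most_common orders equal counts by first occurrence
        opt_shape = Counter(shapes).most_common(1)[0][0]
    elif opt == "min":
        opt_shape = min_shape
    elif opt == "max":
        opt_shape = max_shape
    else:
        raise ValueError(f"unknown opt: {opt!r}")
    return {"min": min_shape, "opt": opt_shape, "max": max_shape}


traced = [(1, 3, 224, 224), (64, 3, 224, 224), (1, 3, 224, 224)]
print(build_dynamic_profile(traced))
print(build_dynamic_profile(traced, opt="max"))
```

With the default "mode", this profile still spans batch sizes 1 to 64, but the optimal shape is batch size 1 because that shape was traced most often. TensorRT tunes kernels for the optimal shape, so the other shapes in the range may run less efficiently.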

Static shape profiles

With the static type, a single with block of the ShapeProfileManager context manager captures one static shape profile per forward call made inside it.

```python
# Trace with different fixed shapes in static mode
with cmanager.shape_profile(type="static"):
    model_qlip(torch.randn(1, 3, 224, 224).to(device).to(dtype))
    model_qlip(torch.randn(4, 3, 224, 224).to(device).to(dtype))
    model_qlip(torch.randn(8, 3, 224, 224).to(device).to(dtype))
    # ... add more shapes as needed
```

In the case above, we traced the model with three different shapes. Note that the compiled model will only work with these shapes. You can do the same for a model with multiple inputs. Keep in mind that compilation time increases with the number of supported shapes.

Skip n shapes

Skipping the first n shapes during tracking is useful when a single high-level call, such as generate(), invokes the compiled module several times with different shapes. For example, an LLM commonly has prefill and decode stages with different shapes. To track shapes only for the decode stage, you can skip the prefill stage, which takes one forward pass.

Here is an example of how to obtain a dynamic shape profile with batch sizes from 1 to 4 only for the decode stage:

```python
inputs_bs1 = {"input_ids": torch.randint(0, 1000, (1, 1)).to(device)}
inputs_bs4 = {"input_ids": torch.randint(0, 1000, (4, 1)).to(device)}

with cmanager.shape_profile(type="dynamic") as sp:
    # skip the first forward pass (prefill) of each generate() call
    with sp.skip_n(n=1):
        model.generate(**inputs_bs1)
    with sp.skip_n(n=1):
        model.generate(**inputs_bs4)
    # ... add more shapes as needed
```
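The skip semantics can be modelled with a small plain-Python recorder. ShapeRecorder and fake_generate are hypothetical stand-ins, not Qlip classes. We assume that skip_n(n) ignores the first n forward calls made inside its block. fake_generate mimics an LLM generate() loop with one prefill pass followed by one decode pass per new token.

```python
from contextlib import contextmanager


class ShapeRecorder:
    """Hypothetical stand-in for a shape profile that records forward-call shapes."""

    def __init__(self):
        self.shapes = []
        self._to_skip = 0

    @contextmanager
    def skip_n(self, n):
        # ignore the first n calls made inside this block
        self._to_skip = n
        try:
            yield self
        finally:
            self._to_skip = 0

    def record(self, shape):
        if self._to_skip > 0:
            self._to_skip -= 1  # e.g. the prefill pass
            return
        self.shapes.append(tuple(shape))


def fake_generate(recorder, batch, prompt_len, new_tokens):
    """Mimics generate(): one prefill call, then one decode call per new token."""
    recorder.record((batch, prompt_len))
    for _ in range(new_tokens):
        recorder.record((batch, 1))


rec = ShapeRecorder()
with rec.skip_n(1):
    fake_generate(rec, batch=1, prompt_len=16, new_tokens=3)
with rec.skip_n(1):
    fake_generate(rec, batch=4, prompt_len=16, new_tokens=3)
print(sorted(set(rec.shapes)))
```

Only decode shapes reach the recorder. A dynamic profile built from them would cover batch sizes 1 to 4 with sequence length 1, and the prefill shapes with length 16 would not widen it.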

Fake mode

You can enable fake mode to skip actual computation during shape profiling. This is useful when you want to profile the model without actually running it, for example to avoid out-of-memory errors for large models.

```python
with cmanager.shape_profile(type="dynamic", fake_mode=True):
    model(torch.randn(1, 3, 224, 224).to(device).to(dtype))
    model(torch.randn(4, 3, 224, 224).to(device).to(dtype))
    # ... add more shapes as needed
```

Mixing dynamic and static profiles

Nvidia backend allows mixing multiple dynamic and static shape profiles for maximum flexibility.
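One way to picture a mix of profiles is a lookup that tries each profile in turn. The plain-Python sketch below only models that idea. find_profile is a hypothetical helper: static profiles are exact shapes and dynamic profiles are per-dimension (min, max) ranges. Checking static profiles first is our own assumption, not documented Qlip behavior. The error message mirrors the one quoted under Wrong shapes in Troubleshooting and Limitations.

```python
Shape = tuple[int, ...]


def find_profile(shape: Shape, static: list[Shape], dynamic: list[tuple[Shape, Shape]]) -> str:
    """Hypothetical sketch: return the name of the first profile that accepts `shape`."""
    for i, s in enumerate(static):
        if shape == s:
            return f"static[{i}]"
    for i, (lo, hi) in enumerate(dynamic):
        # every dimension must lie inside the [min, max] range of the profile
        if len(shape) == len(lo) and all(a <= d <= b for a, d, b in zip(lo, shape, hi)):
            return f"dynamic[{i}]"
    raise ValueError("Invalid input shape: no optimization profile defined for the given input shapes.")


static_profiles = [(1, 3, 224, 224), (8, 3, 224, 224)]
dynamic_profiles = [((16, 3, 224, 224), (64, 3, 224, 224))]
print(find_profile((8, 3, 224, 224), static_profiles, dynamic_profiles))
print(find_profile((32, 3, 224, 224), static_profiles, dynamic_profiles))
```

In this setup, batch sizes 1 and 8 hit exact static profiles and batch sizes 16 to 64 fall into the dynamic range. Batch size 4 matches nothing and raises the error.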

Compilation of Quantized Models

The following quantization types are supported by the Nvidia backend:

Supported quantization types

- w8a8 (both weights and activations are quantized) with int8 data types for linear and convolution layers. This is supported on almost all NVIDIA GPUs from the Turing to the Blackwell architecture, for instance RTX 2060, RTX 2080, RTX 3090, A100, L40s, H100 and B200.
- w8a8 with float8_e4m3fn for linear and convolution layers. This is a newer quantization type, supported by Nvidia GPUs starting from the Ada Lovelace architecture, for instance L40s, RTX 4090, H100, H200 and B200.
- w4a16: block quantization of weights with the int4 data type and no quantization of activations. This type of quantization helps reduce memory usage for large models. It can also accelerate inference for small batches, where weight memory transfer takes a significant part of the inference time.
- w4a4 with the float4 data type. This is supported starting from Nvidia Blackwell and will be supported by Qlip in a future release.
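A back-of-the-envelope calculation shows why w4a16 matters for large models. The sketch below compares weight storage for float16 weights and int4 block-quantized weights. The group size of 128 and one float16 scale per group with no zero points are illustrative assumptions, not the exact layout used by Qlip or TensorRT. Activations, KV caches and non-quantized layers are ignored.

```python
def weight_bytes_fp16(n_params: int) -> int:
    # float16 stores each weight in 2 bytes
    return 2 * n_params


def weight_bytes_int4_blocks(n_params: int, group_size: int = 128) -> int:
    # int4 packs two weights per byte
    packed = (n_params + 1) // 2
    # assumption: one float16 scale (2 bytes) per block of `group_size` weights
    n_groups = -(-n_params // group_size)  # ceiling division
    return packed + 2 * n_groups


n_params = 7_000_000_000  # e.g. a 7B-parameter model
fp16 = weight_bytes_fp16(n_params)
int4 = weight_bytes_int4_blocks(n_params)
print(f"float16: {fp16 / 1e9:.2f} GB, int4 (group 128): {int4 / 1e9:.2f} GB")
```

Under these assumptions, a 7B-parameter model needs 14.00 GB of float16 weights versus about 3.61 GB for int4. At small batch sizes, every forward pass streams all weights from memory, so that is also roughly 3.9x fewer bytes to read per step.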

To quantize models with the listed types, we can use predefined configurations for QuantizationManager:

Predefined quantization configurations for Nvidia

- NVIDIA_INT_W8A8: static w8a8 quantization with the int8 data type.
- NVIDIA_FLOAT_W8A8: static w8a8 quantization with the float8 data type.
- NVIDIA_INT_W4WO: weight-only quantization with the int4 data type.
- NVIDIA_INT_W8A8_PER_TOKEN_DYNAMIC: dynamic per-token w8a8 quantization with the int8 data type.
- NVIDIA_FLOAT_W8A8_PER_TOKEN_DYNAMIC: dynamic per-token w8a8 quantization with the float8 data type.

Let’s quantize a model with w8a8 quantization using int8 data type.

For quantized inference, we need to quantize weights and activations in linear and convolution layers.

```python
import qlip
import torch

from qlip.quantization import QuantizationManager
from qlip.compiler.nvidia import NVIDIA_INT_W8A8, NVIDIA_FLOAT_W8A8, NVIDIA_INT_W4WO

device = qlip.node.device
dtype = torch.bfloat16

# initialize model (create_my_model() is a placeholder for your own model factory)
model = create_my_model().to(device).to(dtype)
model.eval()

# Prepare quantization wrapper
quantizer = QuantizationManager()

# Select modules to quantize (Linear in this case)
modules = [
    mod for mod in model.modules()
    if isinstance(mod, torch.nn.Linear)
]

quantizer.setup_modules(
    modules,
    # quantizing each row of weights separately
    weights_granularity="per-channel",
    # number of batches for calibration of quantization ranges
    calibration_iterations=1,
    # Predefined quantization parameters for NVIDIA INT8 W8A8
    **NVIDIA_INT_W8A8
)

# run calibration on an example batch (`input`) to estimate activation ranges
with torch.no_grad():
    model(input)
```
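To illustrate what weights_granularity="per-channel" means, the plain-Python sketch below gives each weight row its own int8 scale, max|w| / 127. Symmetric rounding to [-127, 127] is an assumption made for illustration. The exact scheme encoded in NVIDIA_INT_W8A8 is not described in this guide, and activation quantization is left out.

```python
def quantize_per_channel(weight: list[list[float]]) -> tuple[list[list[int]], list[float]]:
    """Symmetric int8 quantization with one scale per weight row (output channel)."""
    q_rows, scales = [], []
    for row in weight:
        amax = max(abs(w) for w in row)
        scale = amax / 127 if amax > 0 else 1.0  # all-zero rows get a dummy scale
        # round to the nearest integer and clamp to the symmetric int8 range
        q_rows.append([max(-127, min(127, round(w / scale))) for w in row])
        scales.append(scale)
    return q_rows, scales


def dequantize(q_rows: list[list[int]], scales: list[float]) -> list[list[float]]:
    return [[q * s for q in row] for row, s in zip(q_rows, scales)]


# rows with very different magnitudes; a single per-tensor scale would give row 1 only a few levels
weight = [[0.5, -1.0, 0.25], [0.02, 0.01, -0.04]]
q, scales = quantize_per_channel(weight)
restored = dequantize(q, scales)
print(q)
print(restored)
```

Because each row is scaled by its own maximum, the small-magnitude second row still uses the full int8 range. The reconstruction error of every element stays within half of its row's scale.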

Now we can compile this quantized model with Qlip compiler:

```python
from qlip.compiler.nvidia import NvidiaBuilderConfig, NvidiaCompileManager

# Setup compile manager for quantized model
compile_manager = NvidiaCompileManager(model, workspace="model_qlip")
model_qlip = compile_manager.setup_model(dtype=dtype)

# Trace with example inputs
with compile_manager.shape_profile("static"):
    model_qlip(input)

# Compile the model
compile_manager.compile()

# Run inference
output = model_qlip(input)
```

Compilation of Components and Submodules

You can compile specific parts of the model separately using the setup_modules() method.

To compile one component, use the component parameter. You can also provide a custom name for the component with the component_name parameter.

```python
cmanager.setup_modules(component="encoder.embeddings", component_name="embeddings")
```

You can also compile specific modules by their types with the module_types parameter.

```python
cmanager.setup_modules(module_types=[torch.nn.Linear])
```

To compile specific modules by their names, use the modules parameter.

```python
cmanager.setup_modules(modules=["encoder", "decoder"])
```

You can also address submodules within a component by combining the component parameter with module_types or modules.

```python
cmanager.setup_modules(component="encoder.embeddings", module_types=[torch.nn.Linear])
cmanager.setup_modules(component="encoder", modules=["embeddings", "transformer"])
```

There are cases when you want to compile only specific parts of the model. For instance:

- It’s hard to trace the graph of the whole model because it contains unsupported operations.
- It’s hard to compile the whole model due to memory limitations.
- You want to mix int8 and float8 data types in the model.

At the same time, each compiled module requires its own memory for execution. Qlip solves this problem in two ways:

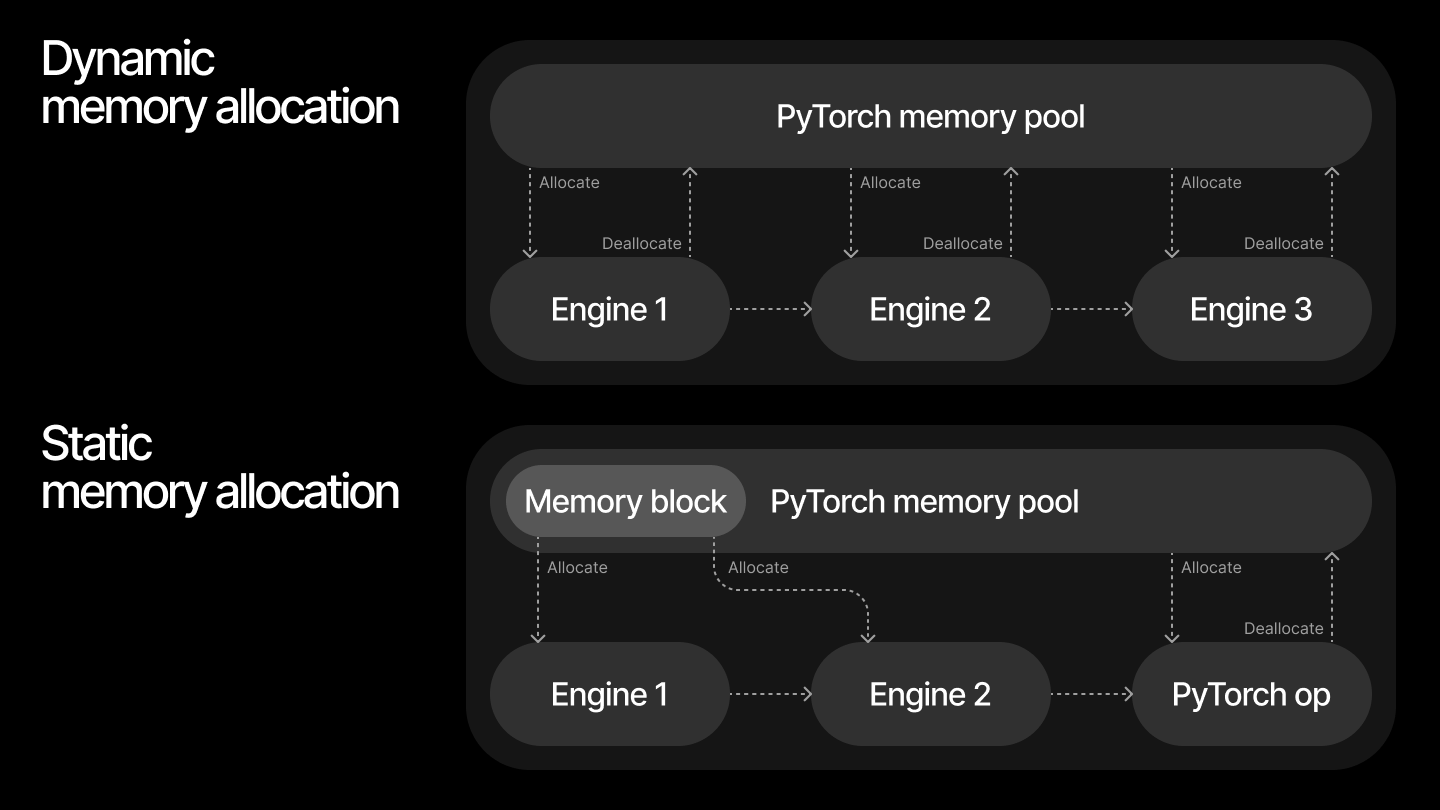

Memory allocation strategies

- Dynamic allocation of memory for compiled modules from the PyTorch memory pool. This is straightforward and works well for most cases, but can lead to memory fragmentation.
- Static allocation, which lets compiled modules share memory through NvidiaMemoryManager. In the simplest case, you can allocate device memory for all compiled modules with the allocate_max_device_memory() method. Internally, it uses NvidiaMemoryManager, which can be obtained with the get_memory_manager() method. This approach is more efficient for inference of multiple models in the same process.

When comparing the two approaches for handling maximum possible input sizes, dynamic memory allocation typically results in higher overall memory usage. This is because memory is returned to the pool with some delay, and subsequent blocks may allocate additional memory before the previous memory is fully released. Additionally, running inference on varying input sizes can cause memory fragmentation.

In contrast, static memory allocation enables more efficient memory reuse across compiled modules, reducing total memory consumption. The Qlip memory manager analyzes the input, output, and intermediate tensors of all compiled modules and allocates a single contiguous memory block to accommodate them.
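The saving from static allocation can be estimated with simple arithmetic. The plain-Python sketch below uses hypothetical per-module sizes and a simplified model of our own. Compiled modules run one after another, each module's inputs and outputs stay live, and only intermediate tensors are reused from one shared region. This is not how NvidiaMemoryManager computes its allocation; it only illustrates why sharing helps.

```python
from dataclasses import dataclass

MiB = 1 << 20


@dataclass
class ModuleMemory:
    name: str
    io_bytes: int            # inputs + outputs at the largest profiled shapes
    intermediate_bytes: int  # activations / workspace needed while the module runs


def separate_buffers(mods: list[ModuleMemory]) -> int:
    # every compiled module keeps its own private buffer
    return sum(m.io_bytes + m.intermediate_bytes for m in mods)


def shared_buffer(mods: list[ModuleMemory]) -> int:
    # modules run sequentially, so intermediates reuse one region sized for the largest
    return sum(m.io_bytes for m in mods) + max(m.intermediate_bytes for m in mods)


# hypothetical sizes for two separately compiled blocks
mods = [
    ModuleMemory("block_1", io_bytes=64 * MiB, intermediate_bytes=256 * MiB),
    ModuleMemory("block_2", io_bytes=64 * MiB, intermediate_bytes=448 * MiB),
]
print(separate_buffers(mods) // MiB, shared_buffer(mods) // MiB)
```

With these made-up numbers, private buffers need 832 MiB while the shared layout needs 576 MiB. The gap grows with every additional compiled module, because only the largest intermediate footprint is paid once.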

Suppose we want to compile two blocks of the model separately:

```python
import torch
import qlip
from qlip.compiler.nvidia import NvidiaCompileManager

...

cmanager = NvidiaCompileManager(model, workspace="model_qlip")
cmanager.setup_modules(["block_1", "block_2"], dtype=dtype)

# tracing model with example inputs
with cmanager.shape_profile("static"):
    model(input)

cmanager.compile()

# run with the traced shape (a static profile only covers the shapes seen during tracing)
output = model(input)
```

To make the compiled blocks share the same memory, allocate it via NvidiaInferenceManager:

```python
from qlip.inference.nvidia import NvidiaInferenceManager

imanager = NvidiaInferenceManager.from_compilemanager(cmanager)
imanager.allocate_max_device_memory()
output = model(input)
```

Using Pre-exported ONNX Models

If you already have an ONNX model exported from your PyTorch model, you can pass it directly to skip the ONNX export step during compilation:

```python
cmanager = NvidiaCompileManager(model, workspace="model_qlip")
model_qlip = cmanager.setup_model(onnx_model="path/to/model.onnx", dtype=dtype)

with cmanager.shape_profile("static"):
    model_qlip(input)

cmanager.compile()
```

You can also pass onnx_model when setting up individual components:

```python
cmanager.setup_modules(component="encoder", onnx_model="path/to/encoder.onnx")
```

Enabling and Disabling Compiled Modules

After compilation, you can toggle compiled modules on and off without removing them. This is useful for debugging or for comparing the outputs of the compiled and original models.

```python
# Disable compiled computation (use original PyTorch modules)
cmanager.enable(False)
output_original = model(input)

# Re-enable compiled computation
cmanager.enable(True)
output_compiled = model(input)
```

To permanently restore the original modules and remove compiled computation:

```python
cmanager.remove()
# model now uses original PyTorch modules
```

Weight Streaming

For large models that don’t fit in GPU memory during inference, TensorRT supports weight streaming. This requires a specific builder flag during compilation and a session config option at inference time.

Compilation with weight streaming:

```python
from qlip.compiler.nvidia import NvidiaBuilderConfig, NvidiaCompileManager

config = NvidiaBuilderConfig()
config.add_builder_flag("WEIGHT_STREAMING")

cmanager = NvidiaCompileManager(model, workspace="model_qlip")
model_qlip = cmanager.setup_model(builder_config=config, dtype=dtype)
```

Note

Adding the WEIGHT_STREAMING flag automatically sets STRONGLY_TYPED as well.

Inference with weight streaming:

```python
from qlip.inference.nvidia import NvidiaSessionConfig

# Stream all weights from host memory (0 = stream everything)
config = NvidiaSessionConfig(weight_streaming_budget_v2=0)

# Alternatively, default behavior (no streaming, -1 = disabled)
config = NvidiaSessionConfig(weight_streaming_budget_v2=-1)
```

CUDA Graph Capture

Qlip provides CudaGraphManager for PyTorch-level CUDA graph capture. This is different from the TensorRT-level CUDA graphs enabled via NvidiaSessionConfig(use_cuda_graph=True): CudaGraphManager captures the entire PyTorch forward pass, including multiple compiled modules and the surrounding PyTorch operations.

For each unique combination of input shapes, CudaGraphManager captures and stores a separate CUDA graph. On subsequent calls with the same shapes, the stored graph is replayed without re-capturing. If a new input size exceeds the previously allocated memory, the graph is reset and re-captured.

Tip

Do the first warmup run with the largest shapes for all inputs. This ensures that enough memory is allocated upfront so that smaller shapes can reuse the same allocations without triggering a reset.

```python
from qlip.inference.cuda_graph import CudaGraphManager, no_cuda_graph

# Capture CUDA graph for the model's forward method
CudaGraphManager.compile(model)

# First call with the largest expected shape to warm up and allocate memory
output = model(large_input)

# Subsequent calls with smaller shapes reuse allocations
output = model(small_input)

# Temporarily disable CUDA graph (e.g., for debugging)
with no_cuda_graph():
    output = model(input)

# Permanently remove CUDA graph and restore original method
CudaGraphManager.restore(model)
```

Note

CudaGraphManager automatically resets the graph when non-tensor inputs change or when input sizes exceed previously allocated memory.
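The capture, replay and reset rules above can be modelled in plain Python to see why the warmup tip matters. GraphCache is a hypothetical model, not the code of CudaGraphManager. A "graph" here is just an integer id, and we approximate "previously allocated memory" by the largest element count seen at each input position.

```python
from math import prod


class GraphCache:
    """Hypothetical model of the capture / replay / reset policy described above."""

    def __init__(self):
        self.graphs = {}      # tuple of input shapes -> captured graph id
        self.capacity = None  # allocated elements per input position
        self.consts = None    # non-tensor inputs seen at the last reset
        self.captures = 0
        self.resets = 0

    def __call__(self, shapes, consts=()):
        sizes = [prod(s) for s in shapes]
        if self.capacity is None:
            # first call allocates memory for these sizes
            self.capacity, self.consts = sizes, consts
        elif consts != self.consts or any(n > c for n, c in zip(sizes, self.capacity)):
            # non-tensor input changed or input too large: drop graphs and reallocate
            self.graphs.clear()
            self.resets += 1
            self.capacity = [max(n, c) for n, c in zip(sizes, self.capacity)]
            self.consts = consts
        key = tuple(tuple(s) for s in shapes)
        if key not in self.graphs:
            self.captures += 1  # capture a graph for this shape combination
            self.graphs[key] = self.captures
        return self.graphs[key]  # replay the stored graph


warm = GraphCache()
for shapes in ([(64, 3, 224, 224)], [(8, 3, 224, 224)], [(64, 3, 224, 224)]):
    warm(shapes)
cold = GraphCache()
for shapes in ([(8, 3, 224, 224)], [(64, 3, 224, 224)]):
    cold(shapes)
print(warm.captures, warm.resets, cold.captures, cold.resets)
```

The warm cache never resets because its first call allocated memory for batch size 64. The cold cache has to discard its batch-8 graph when batch size 64 arrives, which is the re-capture the tip is meant to avoid.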

Troubleshooting and Limitations

This section covers common issues and limitations when using Qlip for model compilation and inference on Nvidia GPUs.

Handling input tuples, dictionaries and shape tensors

Models can accept multiple inputs as arguments and keyword arguments. Non-tensor inputs are interpreted as constant values.

Note

Qlip supports tuples and dictionaries as inputs for compilation and inference, but only with a single level of nesting.

Note

Any input that can be converted to torch.Size, for example a tuple of integers, is interpreted as a shape tensor by the Nvidia backend. However, this is only supported with the TorchScript ONNX exporter.

The following example works correctly with TorchScript ONNX export:

```python
import qlip
import torch
from qlip.compiler.nvidia import NvidiaCompileManager

class MultiInputModel(torch.nn.Module):
    def forward(self, input_1, input_2, shape):
        input_2 = input_2.reshape(*shape)
        return input_1 + input_2

model = MultiInputModel().to(qlip.node.device)
cmanager = NvidiaCompileManager(model, workspace="model_workspace")
model_qlip = cmanager.setup_model()

input_1 = torch.randn(1, 16, 64, 64).to(qlip.node.device)
input_2 = torch.randn(1, 16).to(qlip.node.device)
shape = (1, 16, 1, 1)

# Shape profiling must happen before compilation
with cmanager.shape_profile("dynamic"):
    model_qlip(input_1, input_2, shape)

# shape tensors require TorchScript export, which is not the default for torch >= 2.9
cmanager.compile(dynamo=False)
output = model_qlip(input_1, input_2, shape)
```

ONNX Export Options

The Nvidia backend supports two ONNX exporters: TorchScript and Dynamo.

| Feature | TorchScript Export | Dynamo Export |
|---|---|---|
| Default for PyTorch | <= 2.8 | >= 2.9 |
| Quantization | torch <= 2.8 | Yes |
| Shape tensors | Yes | No |

You can specify the ONNX exporter to use for compilation with the dynamo parameter of the compile() method.

```python
cmanager.compile(dynamo=True)   # Force Dynamo export
cmanager.compile(dynamo=False)  # Force TorchScript export
```
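The export table can be turned into a small decision helper. The plain-Python sketch below is our own reading of the table, not logic taken from Qlip. choose_dynamo_flag is hypothetical and returns the value you would pass as compile(dynamo=...). It reads "Quantization: torch <= 2.8" as meaning that TorchScript export handles quantized models only up to PyTorch 2.8. In real code, the version string would come from torch.__version__.

```python
def parse_torch_version(version: str) -> tuple[int, int]:
    # "2.8.0+cu128" -> (2, 8); the local suffix after "+" is ignored
    major, minor = version.split("+")[0].split(".")[:2]
    return int(major), int(minor)


def choose_dynamo_flag(torch_version: str, quantized: bool = False, shape_tensors: bool = False) -> bool:
    """Sketch: pick compile(dynamo=...) from the exporter table above."""
    new_torch = parse_torch_version(torch_version) >= (2, 9)
    if shape_tensors:
        # shape tensors are only supported by TorchScript export
        if quantized and new_torch:
            raise ValueError("TorchScript export supports quantization only for torch<=2.8")
        return False
    # otherwise keep the per-version default; both defaults handle quantized models
    return new_torch


print(choose_dynamo_flag("2.8.0+cu128"))
print(choose_dynamo_flag("2.9.1", quantized=True))
print(choose_dynamo_flag("2.10.0", shape_tensors=True))
```

The only combination the table rules out is a quantized model with shape tensors on PyTorch 2.9 or newer. The helper raises for that case instead of silently picking an exporter that cannot handle it.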

Inference on different Nvidia GPUs

A compiled model can only be used on the same GPU it was compiled for. To run inference on a different GPU, you need to compile the model again on that GPU.

TensorRT can build a single engine that runs on multiple GPUs, but we have found that this leads to significant performance degradation.

Cross-TensorRT version inference

Qlip also does not support inference of compiled models across different TensorRT versions. It is necessary to compile the model with the same TensorRT version as the one used for inference. We have also tested the TensorRT feature for loading engines with different TensorRT versions, but it leads to significant performance degradation and is not recommended.
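Engines are tied to both the GPU and the TensorRT version, so it can help to record both at compile time and check them before loading. The plain-Python sketch below is a hypothetical guard you would maintain yourself; this guide does not say that Qlip stores such metadata. The GPU names and version strings are illustrative. In practice, the values could come from torch.cuda.get_device_name() and tensorrt.__version__.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BuildInfo:
    """Hypothetical record you save next to the workspace at compile time."""
    gpu_name: str
    tensorrt_version: str


def compatibility_problems(built: BuildInfo, runtime: BuildInfo) -> list[str]:
    problems = []
    if built.gpu_name != runtime.gpu_name:
        problems.append(f"compiled on {built.gpu_name}, running on {runtime.gpu_name}: recompile on this GPU")
    if built.tensorrt_version != runtime.tensorrt_version:
        problems.append(
            f"compiled with TensorRT {built.tensorrt_version}, running {runtime.tensorrt_version}: "
            "recompile with the runtime TensorRT version"
        )
    return problems


built = BuildInfo("NVIDIA L40S", "10.6.0")
print(compatibility_problems(built, BuildInfo("NVIDIA L40S", "10.6.0")))
print(compatibility_problems(built, BuildInfo("NVIDIA H100 80GB HBM3", "10.7.0")))
```

An empty list means the engine matches the current machine. Any entry means the workspace should be recompiled rather than loaded.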

Wrong shapes

If you encounter the shape-related error "ValueError: Invalid input shape: no optimization profile defined for the given input shapes." during inference, it usually means one of two things. Either the model was not compiled with shape profiles covering the given inputs, or the input shapes do not match the expected shapes.

Compilation errors with linked axes

If compilation fails due to incompatible dynamic axes, it may be because the model has multiple inputs that share a dynamic dimension (e.g. the same batch size) but the compiler treats them as independent.

When using dynamic shape profiles, Qlip automatically generates unique names for each dynamic axis. By default, axes with the same dynamic dimension across different inputs are treated as independent. By assigning the same custom name to multiple axes, you tell the compiler that these axes are linked and always have the same value. This allows TensorRT to produce more efficient optimization profiles.

Use set_axes_names() before compilation to set custom axes names.

Mappings can be specified by module type or by module name:

```python
cmanager.set_axes_names({
    torch.nn.Linear: {
        "input_0": "batch_size",
    },
    "decoder": {
        "sample_0": "batch_size",  # linked to the same "batch_size" axis above
        "sample_2": "width",
        "sample_3": "height",
    },
})
```
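To see what linking buys, the plain-Python sketch below groups traced dimensions by axis name. Two assumptions are ours. First, a key such as "sample_2" means dimension 2 of the input named sample. Second, collect_axis_ranges is a hypothetical helper, not part of Qlip. Naming both batch dimensions "batch_size" produces one shared range instead of two independent ones, and a call where the linked sizes disagree is rejected.

```python
def collect_axis_ranges(calls, axes_names):
    """Hypothetical sketch: (min, max) size per named axis over traced calls.

    calls: list of {input_name: shape}; axes_names: {"<input>_<dim>": axis_name}.
    """
    ranges = {}
    for shapes in calls:
        seen = {}  # axis name -> size within this call
        for key, axis in axes_names.items():
            input_name, dim = key.rsplit("_", 1)
            size = shapes[input_name][int(dim)]
            # linked axes must agree inside a single call
            if seen.setdefault(axis, size) != size:
                raise ValueError(f"linked axis {axis!r} has different sizes in one call")
            lo, hi = ranges.get(axis, (size, size))
            ranges[axis] = (min(lo, size), max(hi, size))
    return ranges


axes = {"sample_0": "batch_size", "timestep_0": "batch_size", "sample_2": "height", "sample_3": "width"}
calls = [
    {"sample": (1, 4, 64, 64), "timestep": (1,)},
    {"sample": (4, 4, 128, 96), "timestep": (4,)},
]
print(collect_axis_ranges(calls, axes))
```

Without the shared name, the two batch dimensions would get separate ranges. The compiler could then not assume they are always equal, which is what causes the incompatible-axes failures described above.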

Moving models between Nvidia GPUs on a single machine

Currently, Qlip does not support moving compiled models between different GPUs. Instead, you can specify the device during compilation or when loading the model.

```python
from qlip.compiler.nvidia import NvidiaBuilderConfig, NvidiaCompileManager

cmanager = NvidiaCompileManager(
    model,
    workspace="model_workspace",
)
compiled_model = cmanager.setup_model()
# ... capture shape profiles with cmanager.shape_profile() before compiling
cmanager.compile(device="cuda:0")

# or when loading a compiled model for inference:
from qlip.inference.nvidia import NvidiaInferenceManager

inference_manager = NvidiaInferenceManager(
    model,
    workspace="model_workspace",
)
loaded_model = inference_manager.setup_model(device="cuda:0")
```

Out of memory errors

OOM errors during compilation can have several causes:

Common causes of OOM errors

Large model size: The model is too large to fit into the available GPU memory. In this case, you can try to compile the model by blocks.

CPU offload: Offload the model to CPU during compilation to reduce GPU memory usage:

```python
cmanager.compile(cpu_offload=True)
```

Specific kernels: Some operations in the model require more memory than others, leading to OOM errors.

Unload engines after compilation (keep_compiled=False): When compiling multiple modules serially, accumulated engines can consume significant GPU memory. Passing keep_compiled=False to compile() unloads each engine from GPU memory immediately after compilation. Engines are still saved to the workspace and can be loaded later via NvidiaInferenceManager.

```python
cmanager.compile(keep_compiled=False)
```

Fake mode for shape profiling (fake_mode=True): Uses PyTorch’s FakeTensorMode to perform shape profiling without actual computation or memory allocation. This is especially useful for large models, where even running a forward pass for shape capture can trigger OOM errors. See the Fake mode subsection of Working with Multiple Shapes for a full example.

```python
with cmanager.shape_profile(type="dynamic", fake_mode=True):
    model(input_1)
    model(input_2)
```

OOM errors can also occur because TensorRT tries to profile kernels with the float32 data type, even if builder flags specify float16 or bfloat16. In this case, you can try to force the compiler to use strongly typed kernels with set_strongly_typed():

```python
from qlip.compiler.nvidia import NvidiaBuilderConfig, NvidiaCompileManager

nvidia_config = NvidiaBuilderConfig()
nvidia_config.set_strongly_typed()

cmanager = NvidiaCompileManager(model, workspace="model_workspace")
model_qlip = cmanager.setup_model(builder_config=nvidia_config)
```

Profiling and Debugging

TensorRT provides different levels of profiling verbosity for debugging compilation issues. You can set this via NvidiaBuilderConfig:

```python
from qlip.compiler.nvidia import NvidiaBuilderConfig

# Values: "NONE", "DEFAULT", "DETAILED"
config = NvidiaBuilderConfig(profiling_verbosity="DETAILED")
```

Using Polygraphy with Qlip Engines

Qlip provides integration with Polygraphy for inspecting compiled engines. The qlip.inference.nvidia.polygraphy module patches Polygraphy to support the .qlip engine format.

```shell
python -m qlip.inference.nvidia.polygraphy inspect model path/to/model.qlip
```
